DOLLARS IN VICTORIES Personal Injury Law Firm, THE AMMONS LAW FIRM has won RECORD SETTING VERDICTS & SETTLEMENTS for clients nationwide.

We Have The Education, Experience & Resources Needed to Maximize Your Compensation.

-

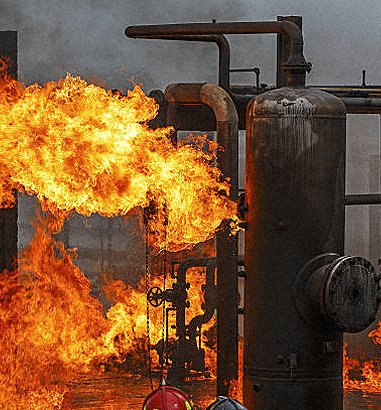

Plant Explosion $82.5 MILLION

Ammons’ client was attempting to start a hot oil heater when the heater exploded. A day later, the man died, leaving behind a widow and three minor children.

-

Workplace Injury & Wrongful Death $48.2 MILLION

The Ammons Law Firm secured settlements on behalf of families affected by a massive explosion that killed two construction workers and injured four others.

-

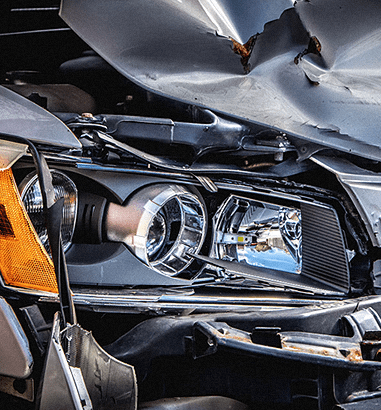

Product Defect $37.5 MILLION

The Ammons Law Firm recovered $37,500,000 on behalf of a client who was seriously injured by a defective vehicle.

-

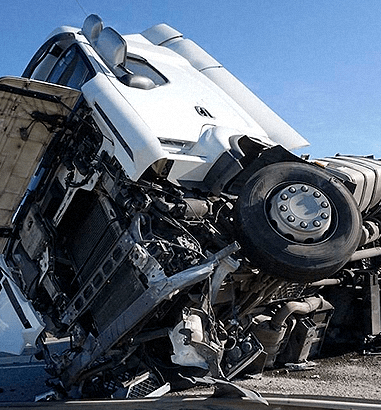

Tire Defect $33 MILLION

The Ammons Law Firm secured $33,000,000 on behalf of the family of a beloved community leader who was killed after a defective tire caused a head-on collision.

-

Traumatic Brain Injury $27.5 MILLION

The Ammons Law Firm secured $27,500,000 on behalf of a client who was seriously injured after a rear-end collision caused the client's vehicle to roll over.

The Ammons Law Firm is uniquely qualified to represent you after a serious accident or injury.

Personal injury trial lawyer Rob Ammons has spent three decades prosecuting personal injury and wrongful death claims on behalf of catastrophically injured clients.

Nationally Recognized Personal Injury Trial Lawyers

At The Ammons Law Firm, we are personal injury trial attorneys who take great pride in working tirelessly for those we represent. Our core mission is to make a difference in our clients’ lives by providing best-in-class representation and holding all wrongdoers accountable for their actions. Our legal team concentrates all its efforts, experience, and resources on achieving justice for everyday folks who have suffered catastrophic losses.

Based in Houston, Texas, The Ammons Law Firm is nationally recognized as one of the top personal injury law firms in Texas and one of the nation’s preeminent auto defect law firms. Our Houston personal injury attorneys have decades of experience prosecuting complex personal injury lawsuits against corporate defendants in tire defect, truck accident, and product liability claims. Our work has led to the recovery of over one billion dollars, the recall of more than 100 million defective products, and the enactment of safer policies and procedures by corporations nationwide.

If you were injured, contact The Ammons Law Firm today to learn how we can help you. There are NO FEES unless we win.

Rob Ammons and his team at The Ammons Law Firm are nationally recognized personal injury trial attorneys litigating complex tire and automotive defect cases. Rob’s work on defective tires and automotive products has led to the recall of over 100 million automobiles and tires.

Rob Ammons believes that being a trial lawyer is about making a difference. “Whether it is about meeting the lifetime needs of a catastrophically injured client, forcing the recall of a deadly consumer product or requiring a trucking company to enact stronger safety policies, it is making a difference that counts.” Ammons’ work and the courageous clients he has represented have exposed defects leading to the recall of nearly 100 million automobiles and tires.

Questions Commonly Asked of Our Houston Personal Injury Lawyers

-

What does a Houston Personal Injury Attorney do?

A Houston personal injury attorney provides legal representation to individuals who have been injured or harmed due to the negligence or wrongful actions of another party. The work can be very complicated, depending on the accident and injuries. In short, a Houston personal injury attorney helps clients through the following stages:

- Consultation: A personal injury lawyer meets with people who have suffered an injury to evaluate their legal right to compensation under the law.

- Investigation: After accepting your case, a personal injury attorney will conduct a thorough investigation into the accident or incident that caused your injury. This involves gathering evidence, interviewing witnesses, and consulting with experts to determine liability and prove damages.

- Legal Representation: A personal injury lawyer handles communications with the defendants responsible for your accident and injuries and the insurance providers representing them.

- Settlement Negotiations: A personal injury lawyer will negotiate on your behalf to resolve your claim for a fair settlement.

- Litigation: Depending on your case and its complexity, settlement negotiations may not provide any value until after a formal legal proceeding has been initiated. A personal injury attorney will file a lawsuit on your behalf and oversee the litigation process to ensure your rights are protected under the law.

- Trial: If the wrongdoers responsible for your injuries will not accept responsibility and pay fair compensation, a personal injury attorney will take your case to trial, where a judge or jury will determine the merits of your claims.

-

How do you choose a Houston Personal Injury Attorney?

Choosing the right Houston personal injury attorney to represent you after an accident is an important decision that can greatly impact the outcome of your case. Some important considerations to take into account when selecting your attorney include:

- Identify your needs: Like doctors, personal injury lawyers have different practice areas. For instance, at The Ammons Law Firm, we have substantial experience and are known nationwide for our work in truck accidents, plant explosions, auto & tire defects, and serious and catastrophic injuries. Our firm may be better suited to handle these types of cases than other law firms in the Houston area.

- Conduct research: Researching an attorney and law firm is essential. Past case success, peer reviews, industry awards and accolades, and client experiences can provide valuable insight when selecting a personal injury attorney.

- Seek referrals: Asking friends and family for recommendations based on experience can help narrow your search to a select few attorneys that you can then research.

- Evaluate resources: Review the past success of the personal injury attorney you are considering to get a better understanding of the firm’s financial capabilities. In complex cases, litigation costs can be substantial. Since most personal injury attorneys will front the costs of litigation, you need an attorney who can afford to take your case to trial.

- Meet the attorney: Meet the attorney you are considering to better understand who they are and whether they are a good fit for you.

-

Who is the best Houston Personal Injury Lawyer?

Who the best personal injury attorney in Houston is remains a subjective question that cannot be settled by one person’s opinion. There are many industry resources and websites that provide information about successful attorneys and their qualifications. Ultimately, the best injury lawyer for you depends on your needs.

-

How can a Houston Personal Injury Attorney help me?

A personal injury attorney can help you receive fair and just compensation under the law by holding wrongdoers responsible for their mistakes. Under the law, you are entitled to compensation for injuries caused by another party’s negligence. While this is straightforward on paper, actually proving wrongdoing and the extent of your injuries is complex, especially when trained lawyers are actively helping the wrongdoer escape liability and minimize the effects of your injuries. To learn more about personal injury law and how an attorney can help you, visit our personal injury resource guide.

-

What does it cost to hire a Houston Personal Injury Attorney?

Our personal injury lawyers work on a contingency fee basis. This means that you are not charged a fee for our services when you hire us. Instead, at the end of your case, we receive a percentage of your total recovery. Under this contractual relationship, our interests are perfectly aligned, and you are only responsible for paying for our services if and when we win your case.

WE'VE RECOVERED OVER 1 BILLION DOLLARS FOR OUR CLIENTS

Our attorneys have extensive experience helping clients nationwide who have been injured by the negligence of others.

- Joe C.

“Rob fought for me as no man has fought for me. The Ammons Law Firm is a law firm that seeks out justice and righteousness for those who have suffered, and I can now move forward in my life.”